Mechanical engineers at the University of Illinois have 3D printed pure copper cold plates that could cut a data center’s cooling power demand from 550 megawatts to just 11 megawatts per gigawatt of computing power. The research, published May 7 in Cell Reports Physical Science, estimates that cooling with the technology would account for only 1.1% of a data center’s total energy usage, compared with more than 30% for conventional air-cooling systems.
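The percentages follow from the megawatt figures with simple arithmetic. The short Python check below is a sketch under one assumption that the article does not state: that a data center's total draw is just computing plus cooling. The function name `cooling_share` is illustrative only.

```python
def cooling_share(cooling_mw, computing_mw=1000.0):
    """Fraction of total power spent on cooling.

    Assumption (not from the article): total = computing + cooling,
    with no other loads such as lighting or power conversion.
    """
    return cooling_mw / (computing_mw + cooling_mw)


# Figures quoted in the article, per gigawatt (1000 MW) of computing power.
air_share = cooling_share(550.0)    # conventional air cooling
plate_share = cooling_share(11.0)   # 3D printed copper cold plates

print(f"air cooling: {air_share:.1%}")
print(f"cold plates: {plate_share:.1%}")
```

Under that assumption the shares come out at roughly 35.5% and 1.1%, in line with the "more than 30%" and "1.1%" figures reported by the researchers.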

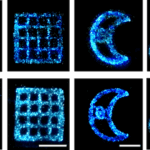

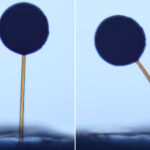

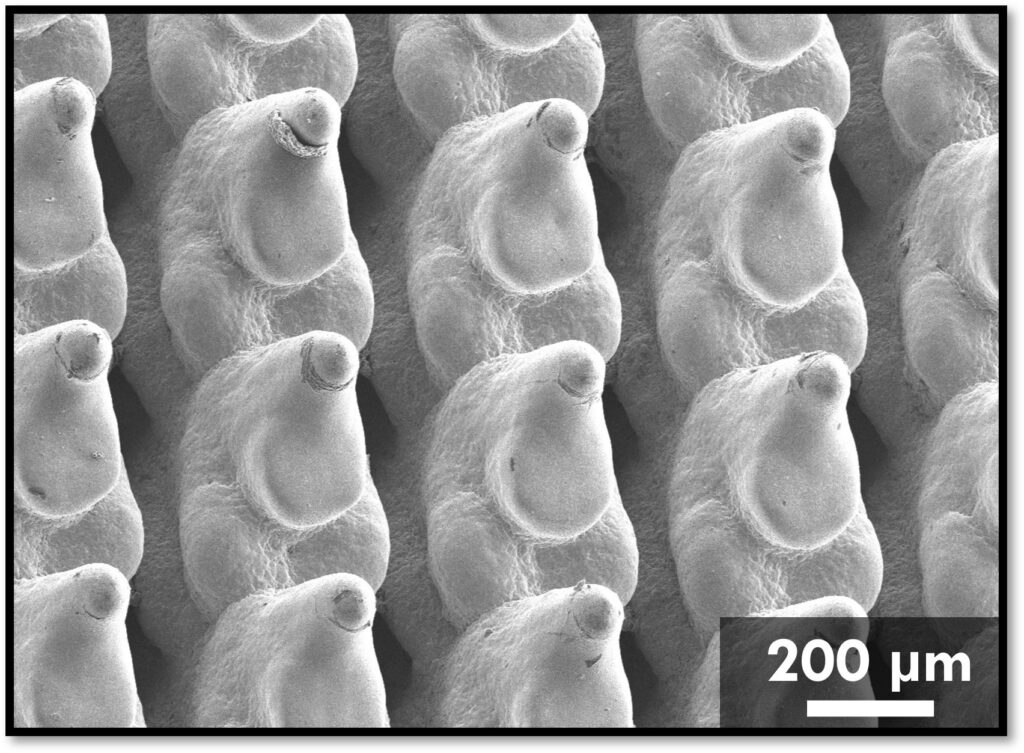

The team, led by MechSE Founder Professor Nenad Miljkovic and graduate student Behnood Bazmi, used a mathematical technique called topology optimization to design fins for the cold plates. Starting from a simple rectangle, the algorithm iterates through possible shapes, estimating cooling capability and pumping power requirements at each step until it converges on an optimal design. “Topology optimization ends up converging on a design which is optimal in maximizing thermal performance and minimizing pumping power,” Miljkovic said.
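The loop described above (start from a rectangle, change the shape, estimate cooling and pumping cost, repeat) can be illustrated with a toy example. The Python sketch below is not the team's method and is not true topology optimization: it is a greedy local search on a small grid, and both scoring functions are hypothetical stand-ins rather than physical models. The grid size, the weight, and every function name are assumptions made for illustration.

```python
import random

ROWS, COLS = 8, 16  # hypothetical grid: 1 = copper, 0 = coolant; flow runs left to right


def cooling_proxy(grid):
    """Stand-in for cooling: count adjacent copper/coolant cell pairs (contact area)."""
    count = 0
    for r in range(ROWS):
        for c in range(COLS):
            if c + 1 < COLS and grid[r][c] != grid[r][c + 1]:
                count += 1
            if r + 1 < ROWS and grid[r][c] != grid[r + 1][c]:
                count += 1
    return count


def pumping_proxy(grid):
    """Stand-in for pumping cost: squared copper count in each cross-section (column)."""
    return sum(sum(grid[r][c] for r in range(ROWS)) ** 2 for c in range(COLS))


def score(grid, weight=0.5):
    """Higher is better: reward contact area, penalize flow blockage."""
    return cooling_proxy(grid) - weight * pumping_proxy(grid)


def initial_rectangle():
    """Starting shape: a plain rectangular fin in the middle of the channel."""
    grid = [[0] * COLS for _ in range(ROWS)]
    for r in range(3, 5):
        for c in range(4, 12):
            grid[r][c] = 1
    return grid


def optimize(steps=2000, seed=0):
    """Flip one random cell per step and keep the flip only if the score improves."""
    rng = random.Random(seed)
    grid = initial_rectangle()
    best = score(grid)
    for _ in range(steps):
        r, c = rng.randrange(ROWS), rng.randrange(COLS)
        grid[r][c] ^= 1            # propose a shape change
        trial = score(grid)
        if trial > best:
            best = trial           # accept the improvement
        else:
            grid[r][c] ^= 1        # revert the change
    return grid, best


start_score = score(initial_rectangle())
final_grid, final_score = optimize()
print(f"start score {start_score:.1f} -> final score {final_score:.1f}")
for row in final_grid:
    print("".join("#" if cell else "." for cell in row))
```

Even this crude search drifts away from the clean rectangle toward irregular shapes, because irregular boundaries raise the contact proxy. Real topology optimization replaces the proxies with fluid and heat-transfer simulations and typically uses gradient-based updates rather than random flips.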

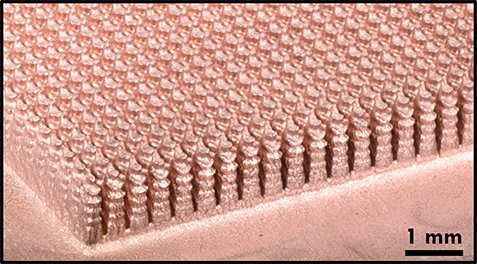

The resulting fins are sharply pointed with jagged edges, far too complex to manufacture with conventional methods. The team partnered with a company called Fabric8 to produce the plates using electrochemical additive manufacturing (ECAM), a process that deposits copper layer by layer through electrochemical plating rather than melting. That matters because pure copper conducts heat far better than the aluminum alloy (AlSiMg) or stainless steel used in most commercial cold plates, but it’s historically been too difficult to 3D print. ECAM resolves that problem, producing copper parts with details as fine as 30 to 50 micrometers, smaller than the width of a human hair.

Testing showed the optimized plates delivered up to 32% better cooling than conventional rectangular-fin cold plates, and reduced pressure drop by up to 68% at the same cooling performance level. That pressure drop reduction means less energy is needed to push coolant through the system. “By bridging the gap between computational design and manufacturing capability, our approach provides a pathway for more energy-efficient liquid cooling of chips and other electronics,” Bazmi said.
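The link between pressure drop and pumping energy comes from the standard hydraulic relation: ideal pumping power equals pressure drop times volumetric flow rate. The Python sketch below applies it with made-up numbers. The baseline pressure drop and flow rate are hypothetical, and it assumes the flow rate stays the same for both plates, which the article does not state (it compares the plates at equal cooling performance).

```python
def pumping_power_w(pressure_drop_pa, flow_m3_per_s, pump_efficiency=1.0):
    """Hydraulic pumping power in watts: pressure drop times volumetric flow rate."""
    return pressure_drop_pa * flow_m3_per_s / pump_efficiency


# Hypothetical operating point, chosen only for illustration.
baseline_dp_pa = 20_000.0          # 20 kPa across a rectangular-fin plate
flow_m3_per_s = 1.0e-3 / 60.0      # 1 liter per minute of coolant

# The article reports up to 68% lower pressure drop for the optimized plate.
optimized_dp_pa = baseline_dp_pa * (1.0 - 0.68)

baseline_w = pumping_power_w(baseline_dp_pa, flow_m3_per_s)
optimized_w = pumping_power_w(optimized_dp_pa, flow_m3_per_s)
saving = 1.0 - optimized_w / baseline_w

print(f"baseline:  {baseline_w:.3f} W")
print(f"optimized: {optimized_w:.3f} W")
print(f"pumping power saved: {saving:.0%}")
```

With the flow rate fixed, the ideal pumping power falls by the same fraction as the pressure drop, whatever baseline values are chosen.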

Data centers are projected to account for up to 12% of the U.S. national grid load by 2028. Air cooling has dominated chip thermal management for 40 to 50 years, but it can’t keep pace with modern high-powered processors. The researchers say their workflow can also be applied to cooling systems for other electronics, and to non-electronic applications, at different size scales. The research was funded by the U.S. Department of Energy.

Source: mechse.illinois.edu