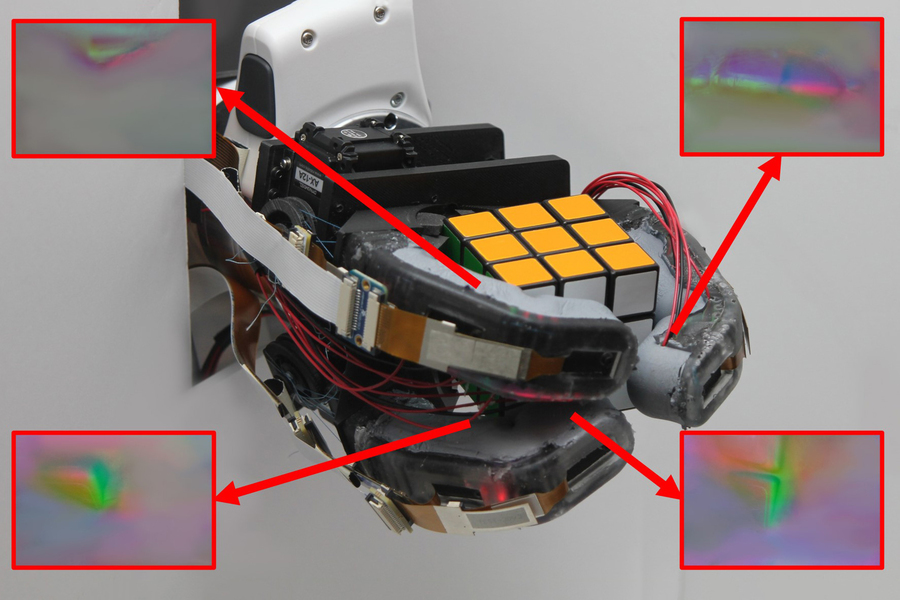

MIT researchers have developed a soft-rigid robotic hand that can accurately identify objects after just one grasp. The design features a rigid skeleton encased in a soft outer layer with high-resolution sensors incorporated under its transparent skin.

These sensors provide continuous touch sensing along the finger’s length, offering richer data about the objects it grasps.

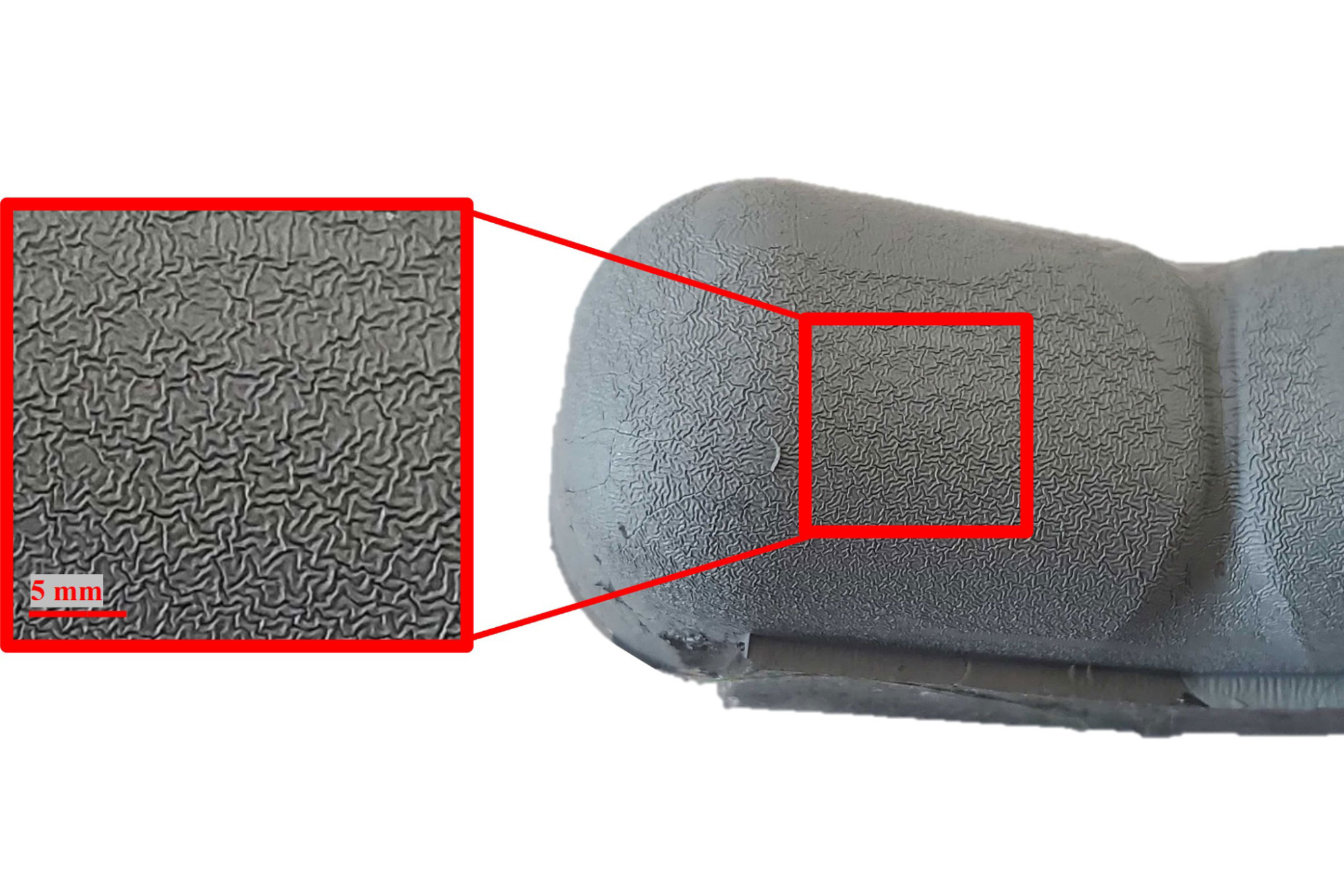

The robotic hand is made up of a 3D printed endoskeleton encased in a transparent silicone skin, molded in a slightly curved position to resemble a human hand. This design reduces the number of uncontrolled wrinkles that could impact the hand’s performance. The endoskeleton contains GelSight sensors, composed of a camera and colored LEDs, which capture images when the hand grasps an object. An algorithm then maps the contours on the object’s surface.

The system recognises objects with 85% accuracy after a single grasp. The soft-rigid design also allows the hand to pick up heavy items and securely grasp pliable objects without crushing them. Potential applications include at-home care robots for the elderly.

“Having both soft and rigid elements is very important in any hand, but so is being able to perform great sensing over a really large area, especially if we want to consider doing very complicated manipulation tasks like what our own hands can do,” said researcher Sandra Liu.

“Our goal with this work was to combine all the things that make our human hands so good into a robotic finger that can do tasks other robotic fingers can’t currently do.”

The researchers used a machine-learning model to identify objects using raw camera image data. In the future, they plan to reduce wear and tear on the silicone, improve the thumb’s actuation, and potentially add sensing to the palm for better tactile distinctions.
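To give a rough sense of what identifying objects from raw image data can look like, here is a minimal Python sketch. It is not the researchers' model: it swaps in a simple nearest-centroid classifier, and the function names (`fit_centroids`, `classify`), the tiny 2x2 "tactile images" and the object labels are all made up for illustration.

```python
from collections import defaultdict
from math import dist


def fit_centroids(images, labels):
    """Average the flattened pixel vectors of each object class."""
    grouped = defaultdict(list)
    for pixels, label in zip(images, labels):
        grouped[label].append(pixels)
    return {
        label: [sum(column) / len(group) for column in zip(*group)]
        for label, group in grouped.items()
    }


def classify(pixels, centroids):
    """Return the label whose centroid is closest to the raw pixel vector."""
    return min(centroids, key=lambda label: dist(pixels, centroids[label]))


# Hypothetical 2x2 tactile images, flattened to four pixel intensities.
# Real sensor images would be far larger; this data is invented.
train_images = [
    [0.9, 0.8, 0.1, 0.0],
    [1.0, 0.7, 0.0, 0.1],
    [0.1, 0.0, 0.9, 1.0],
    [0.0, 0.2, 0.8, 0.9],
]
train_labels = ["mug", "mug", "ball", "ball"]

centroids = fit_centroids(train_images, train_labels)

# Classify one new "grasp" directly from its raw pixel values.
prediction = classify([0.8, 0.9, 0.2, 0.1], centroids)
print(prediction)
```

The point of the sketch is only that the classifier works directly on pixel values, with no hand-crafted features in between. A practical system would likely use a learned model such as a neural network rather than class averages.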
